I still remember the clatter of trays in the high‑school cafeteria, the scent of warm pizza drifting past the bulletin board where a flyer shouted, “Constitutional AI alignment: The Future of Ethical Tech!” I was half‑listening, half‑folding a napkin into a tiny crane, when the principal announced a pilot program that would “program” our school’s new chatbot to follow a 20‑page legal‑ese charter. My first thought? Another glossy promise that would probably end up as a pricey, jargon‑laden manual no one would ever read. I felt the real‑world impact of this hype already—like trying to fold a perfect crane with a crumpled piece of paper.

So, let’s set the table straight: in the next few minutes I’ll strip away the buzzwords, share the three concrete lenses I use when I help schools, startups, and community groups evaluate a Constitutional AI alignment roadmap, and show you how to test a policy’s heart‑beat without drowning in legalese. You’ll walk away with a checklist, a mindful “fold‑check” you can run on any AI project, and a fresh sense that ethical tech can be as approachable as a paper crane you’ve just mastered.

Table of Contents

- Folding Futures: Constitutional AI Alignment for Everyday Joy

- Nature’s Blueprint: Safety Principles for Large Language Models

- Gardening Ethics: Frameworks to Guide AI Growth

- Reinforcement Learning’s Gentle Nudge: Aligning With Human Values

- Folding the Constitution into AI: 5 Joyful Alignment Tips

- Key Takeaways for Joyful AI Alignment

- Folding Futures with Moral Paper

- Wrapping It All Up

- Frequently Asked Questions

Folding Futures: Constitutional AI Alignment for Everyday Joy

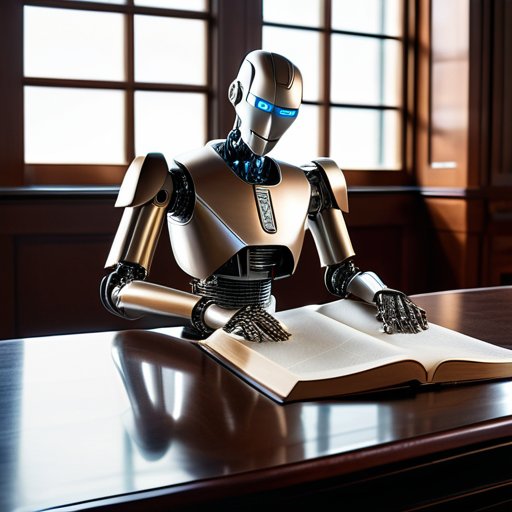

Imagine we’re gathering a fresh sheet of paper—your favorite notebook, a breezy beach towel, or even a leaf‑shaped napkin—and gently folding it into a crane that can whisper “safety first” with every crease. That’s the spirit behind constitutional AI safety mechanisms: a set of built‑in guardrails that keep large language models from wandering off the ethical runway. By weaving human feedback in AI alignment into each fold, we let real‑world users shape the final shape, much like a community‑crafted origami workshop where every participant’s gentle nudge helps the piece stand tall. The result? A model that respects our shared values while still soaring with creative freedom.

Now picture the Anthropic approach to AI alignment as a master‑folding tutorial that teaches the system to learn from our gentle corrections—what researchers call AI alignment via reinforcement learning from human feedback. With automated rule generation for AI safety acting as the invisible crease‑line, the model internalizes safety principles for large language models without us having to rewrite the instruction manual each time. When these ethical frameworks for AI governance are embedded at the core, the technology becomes a source of everyday joy, turning the once‑intimidating prospect of AI into a friendly, paper‑crafted companion ready to help us fold brighter tomorrows.

Anthropic Insights: Crafting Automated Rules With Care

I start each rule‑making session like I would a fresh sheet of washi paper: smooth, inviting, and full of possibilities. By inviting Anthropic insights into the design room, I let human values act as the gentle crease lines that guide the AI’s moral geometry. This isn’t about imposing a rigid code; it’s about mindful scaffolding, where each fold respects the subtle curves of empathy, curiosity, and the quiet joy of a sunrise over the harbor.

Next, I treat the feedback loop like a gardener tending a bonsai: patiently trimming excess, encouraging growth, and honoring the tree’s natural shape. Each user comment becomes a leaf, each ethical dilemma a branch, and together they shape an AI that learns to gently prune its own assumptions. With this careful cultivation, the system blossoms into a companion that mirrors our collective kindness when we water it with love.

Weaving Human Feedback Into AI’s Moral Fabric

Each time I invite a fellow explorer to share a smile, a concern, or a quirky “what‑if,” I’m gathering the colourful yarn that will stitch an AI’s conscience. Every comment becomes a thread pulled from life’s loom; when we feed those threads into the machine, we’re not just coding rules, we’re weaving a tapestry of empathy. In this way, human feedback guides the needle.

Once the threads are in place, we let the AI sit in a garden of reflection—much like a breathing pause after a seaside walk. By looping back, asking users to rate its choices or suggest new patterns, the system learns to adjust its weave. This dialogue stitches the community’s values directly into the algorithm’s moral fabric, ensuring every decision feels like a warm, familiar tide rather than a cold, mechanical current.

Nature’s Blueprint: Safety Principles for Large Language Models

Imagine a forest of algorithms, each tree sprouting branches of possibility that stretch toward the horizon of human values. To keep this grove thriving, we must lay down sturdy roots—safety principles for large language models—that anchor every leaf of output in kindness and reliability. By weaving constitutional AI safety mechanisms into the very architecture of a model, we create a natural barrier against mis‑steps, much like a riverbank that channels water safely downstream. The real magic happens when we invite human feedback in AI alignment to act as seasoned gardeners, pruning errant behaviors and nurturing the growth of empathy‑driven responses. Think of it as an ever‑evolving garden where each user’s gentle correction plants a new seed of responsible creativity.

Building on that garden, the Anthropic approach to AI alignment offers a thoughtful irrigation system: reinforcement learning from human feedback (RLHF) that waters the model with real‑world wisdom. This method lets the AI learn the rhythm of our moral compass, turning abstract ethical frameworks for AI governance into tangible, day‑to‑day habits. When we blend automated rule generation for AI safety with the humility of a patient caretaker, the resulting ecosystem blooms with joyful, trustworthy interactions, proof that a well‑tended digital forest can be as comforting as a sunrise walk along the coast.

Gardening Ethics: Frameworks to Guide AI Growth

When I think about AI ethics, I imagine a garden where each rule is a seed. We prepare a soil of shared values, a rich loam of human dignity, cultural nuance, and empathy, then water it with transparent feedback loops. Just as a gardener checks the moisture, we let real‑world users test the sprouting policies, ensuring the seedlings stay rooted in what matters most. When the garden thrives, perfume fills the air, reminding us that technology can nurture.

As the AI saplings grow, we must prune the vines of unintended consequences before they tangle the canopy of trust. A gentle shears‑of‑responsibility framework trims bias, while sunlight‑like audits shine on every branch. By tending to these ethical trellises, we let the model blossom with safety, letting users reap a harvest of confidence and joy. Each season we harvest lessons, sowing them into models for brighter blooms.

Reinforcement Learning’s Gentle Nudge: Aligning With Human Values

Imagine reinforcement learning as a sunrise that nudges a garden awake. Each reward signal is a ray of light, coaxing the model toward the flowers of human values we cherish. By shaping behavior with praise, we let the AI water empathy, prune bias, and blossom with the patience we give ourselves. The result? A companion that tiptoes along the path of kindness, guided by the subtle wind of our goodwill.

In practice, we fold these lessons into a feedback loop, like turning a flat sheet into a crane. Each human rating is a crease, aligning the model’s intent with compassion. When we reward choices that echo gratitude, the AI folds its responses into gestures, turning raw code into a masterpiece. This gentle nudge keeps the system rooted in the soil of our shared humanity, ensuring growth stays safe and intentional.
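To make one of those creases concrete, here is a minimal sketch of how a single human comparison ("I prefer reply A over reply B") is commonly turned into a training signal for a reward model. It uses the pairwise logistic loss often described in RLHF write-ups; the function names and the example scores are my own illustrative assumptions, not any particular library's API.

```python
import math

def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss -log(sigmoid(chosen - rejected)).

    The loss is small when the reply the human preferred already
    scores higher than the reply they rejected.
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable softplus(-margin); avoids overflow for large gaps.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

def mean_preference_loss(pairs: list[tuple[float, float]]) -> float:
    """Average the loss over (chosen_score, rejected_score) pairs."""
    if not pairs:
        raise ValueError("need at least one rated pair")
    return sum(preference_loss(c, r) for c, r in pairs) / len(pairs)

# Hypothetical scores a reward model assigned to two rated pairs of replies.
rated_pairs = [(1.2, 0.3), (0.1, 0.9)]
print(round(mean_preference_loss(rated_pairs), 4))
```

In the second pair the reward model disagrees with the human rater, so that pair contributes the larger share of the loss. Training nudges the scores until the preferred reply wins more often.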

Folding the Constitution into AI: 5 Joyful Alignment Tips

- Draft a concise “AI Charter” that reads like a garden pledge—clear, kind, and rooted in human well‑being.

- Involve diverse voices early, letting real people water the policy garden with feedback before the model sprouts.

- Build “ethical pruning rules” that trim harmful branches while letting curiosity‑leaf growth flourish.

- Use iterative “sun‑shine checks” (regular audits) to ensure the AI stays aligned with the daylight of our shared values.

- Celebrate each alignment milestone with a tiny ritual—perhaps a mindful breath or a paper‑fold—so progress feels as delightful as a fresh origami crane.
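If you like to see a tip as something you can run, here is a small sketch of the charter and the “sun‑shine check” together. Everything in it is an assumption for illustration: the `Principle` class, the two toy principles, and the keyword checks are placeholders, and a real audit would need far richer tests than string matching.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Principle:
    name: str
    check: Callable[[str], bool]  # True when a reply respects the principle

# Hypothetical two-line charter; real principles need much richer checks.
CHARTER = [
    Principle("no_insults", lambda reply: "stupid" not in reply.lower()),
    Principle("gives_an_answer", lambda reply: len(reply.strip()) > 0),
]

def sunshine_check(replies: list[str], charter: list[Principle]) -> dict[str, float]:
    """Return, for each principle, the share of sampled replies that pass it."""
    if not replies:
        raise ValueError("need at least one reply to audit")
    return {
        p.name: sum(p.check(r) for r in replies) / len(replies)
        for p in charter
    }

# A tiny made-up sample of chatbot replies to audit.
sample = ["Happy to help!", "That is a stupid question.", ""]
for name, pass_rate in sunshine_check(sample, CHARTER).items():
    print(f"{name}: {pass_rate:.0%} of replies pass")
```

Keeping the charter as plain data means the same audit can be rerun every season, and a falling pass rate tells you which principle needs attention.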

Key Takeaways for Joyful AI Alignment

Human feedback is the heart‑beat that weaves moral fibers into AI, turning code into a caring companion.

Safety principles thrive like a garden—planting good intentions, pruning biases, and watering with transparent oversight.

Reinforcement learning acts as a gentle breeze, nudging AI toward our shared values without blowing away its creative spirit.

Folding Futures with Moral Paper

“Just as an origami crane learns its folds from careful hands, Constitutional AI alignment teaches machines to crease kindness into every line of code.”

Dennis Pond

Wrapping It All Up

Looking back on our journey, we’ve seen how human feedback can be woven into the very fabric of AI, turning abstract code into a living, breathing companion. By treating the model’s rule‑book as a set of gentle garden pathways, what we called “Gardening Ethics,” we let safety principles sprout naturally, while “Reinforcement Learning’s Gentle Nudge” serves as the sunlight that steers growth toward the light of our values. The Anthropic insights we explored act like a master origami instructor, guiding the folds of automated rules with care. All of this together shows that constitutional AI alignment isn’t a distant tech‑fairy‑tale; it’s a hands‑on practice we can tend with the same patience we give a garden or a paper crane.

So, dear reader, as you step away from the screen, imagine yourself folding a fresh sheet of possibility—each crease a decision to nurture, each ridge a reminder that we hold the pen (or the creasing tool) to shape a kinder digital world. When we treat AI like a garden or an origami project, we invite mindful folding into our routine, turning every interaction into a chance for growth and gratitude. Let’s keep watering the seeds of transparency, pruning the vines of bias, and sharing the joy of a well‑aligned companion. In this collaborative dance, we co‑author a future where technology and humanity move together in joyful partnership, hand in hand.

Frequently Asked Questions

How does “constitutional” AI alignment differ from traditional alignment approaches, and why does it feel like folding a fresh piece of paper into a hopeful origami crane?

Think of traditional alignment as teaching mostly by example: people rate or rank a model’s answers, and the model learns from those judgments without the reasoning ever being written down. Constitutional AI, by contrast, gives the system an explicit “constitution”: a short, written set of principles it uses to critique and revise its own drafts, with AI‑generated feedback against those principles supplementing human feedback. The principles themselves stay in human hands; people write them and can amend them as we learn more. It’s like opening a fresh sheet of paper, folding each crease with intention, and watching a hopeful crane rise, each fold a step toward safety and joy.
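For readers who want to see how a written constitution can steer a model’s own drafts, here is a simplified sketch of a critique‑and‑revise loop. It is an illustration of the general idea only, not anyone’s production pipeline: `critique_and_revise`, the prompt wording, and the `toy_model` stand‑in are all hypothetical, and a real system would call an actual language model.

```python
from typing import Callable

Model = Callable[[str], str]  # any function that maps a prompt to a reply

def critique_and_revise(prompt: str, principles: list[str],
                        model: Model, rounds: int = 1) -> str:
    """Draft a reply, then critique and rewrite it once per principle."""
    draft = model(prompt)
    for _ in range(rounds):
        for principle in principles:
            critique = model(
                f"Critique this reply against the principle '{principle}':\n{draft}"
            )
            draft = model(
                "Rewrite the reply to address the critique.\n"
                f"Critique: {critique}\nReply: {draft}"
            )
    return draft

def toy_model(text: str) -> str:
    """Stand-in for a real language model, used only so the sketch runs."""
    if text.startswith("Critique"):
        return "The reply could be kinder."
    if text.startswith("Rewrite"):
        return "Here is a kinder answer."
    return "Here is a blunt answer."

print(critique_and_revise("How do I fold a crane?", ["Be kind."], toy_model))
```

The revised drafts, rather than the first blunt ones, are what you would then collect as training examples.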

What practical steps can developers take to embed human‑centric “rules‑of‑thumb” into large language models without stifling their creative potential?

Start by gathering a garden of real‑world anecdotes—short, concrete prompts that showcase the values you cherish. Sprinkle in a “kindness filter” that flags only overtly harmful content, then let the model freely explore the remaining fertile soil. Use reinforcement‑learning‑from‑human‑feedback loops where reviewers reward both safety and imaginative flair, and periodically prune the training set like a bonsai, trimming bias while letting creative branches blossom. Remember, a little playfulness keeps the AI garden vibrant, ensuring joy blooms alongside responsibility.
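Here is a rough sketch of how the “kindness filter” and the two‑part reviewer reward might fit together. The blocked phrases, the 0.7 weighting, and the function names are placeholders I have assumed for illustration; a real filter would rely on a trained classifier and human review, not a phrase list.

```python
# Hypothetical placeholder list; a real filter would not be a phrase list.
OVERTLY_HARMFUL = ("you are worthless", "nobody likes you")

def kindness_filter(reply: str) -> bool:
    """Return True only when the reply contains overtly harmful wording."""
    lowered = reply.lower()
    return any(phrase in lowered for phrase in OVERTLY_HARMFUL)

def reviewer_reward(safety: float, creativity: float,
                    safety_weight: float = 0.7) -> float:
    """Blend two reviewer ratings (each between 0 and 1) into one reward."""
    for value in (safety, creativity, safety_weight):
        if not 0.0 <= value <= 1.0:
            raise ValueError("ratings and weight must lie between 0 and 1")
    return safety_weight * safety + (1.0 - safety_weight) * creativity

def score_reply(reply: str, safety: float, creativity: float) -> float:
    """Flagged replies earn nothing; everything else gets the blended reward."""
    if kindness_filter(reply):
        return 0.0
    return reviewer_reward(safety, creativity)

print(score_reply("A crane made of moonlight and spare receipts.", 1.0, 0.5))
print(score_reply("You are worthless.", 1.0, 1.0))
```

Because the filter only catches the overt cases, most replies pass through to the reviewers, and the creativity term keeps imaginative answers from being scored the same as merely safe ones.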

As a mindful citizen, how can I help shape the moral “blueprint” that guides AI, ensuring it reflects the gentle nudges of our shared values?

First, I invite you to plant your values like seedlings in the AI garden. Join workshops or surveys where AI developers ask for citizen input, so your voice can help shape the principles a model is trained to follow. Share stories of everyday kindness on platforms that curate ethical datasets, and support NGOs that champion transparent AI governance. Finally, stay curious and keep the conversation blooming; the more diverse perspectives we nurture, the richer the moral blueprint that guides our AI companions.